Update to Docker

June 15, 2024 (toolchain,vcd,simulation,debugging,tracing,baremetal,C,gtkwave)

Docker Images

The reference Docker images for building and simulating the examples on this blog have been updated:

https://github.com/five-embeddev/build-and-verify/tree/main/docker

| Target | Description |
|---|---|
| riscv-tool-build | Base image for compiling tools |
| riscv-spike | RISC-V ISA Simulator (Spike) |
| riscv-openocd | OpenOCD JTAG debugger interface |
| riscv-xpack-gcc | xPack packaged GCC |
| riscv-spike-debug-sim | RISC-V Spike ISA simulator configured to run with OpenOCD |
| riscv-spike-debug-gdb | RISC-V Spike ISA simulator configured to run with OpenOCD & GDB |
| riscv-rust | Rust for RISC-V |
| riscv-gnu-toolchain | Build GCC for RISC-V |
| riscv-gnu-toolchain-2 | Build GCC for RISC-V (using Docker Compose to conserve resources) |

Docker Examples

Examples of usage:

https://github.com/five-embeddev/build-and-verify/tree/main/examples

| Example | Description |
|---|---|
| build-c/ | Build a simple C test program for host and target |
| build-rust/ | Build a small Rust program |
| build-run-sim/ | Build a full baremetal example, simulate it with Spike and debug it with GDB |
| test-code-c/ | Small C example program |

Build with CMake

For example, https://github.com/five-embeddev/build-and-verify/tree/main/examples/build-run-sim configures and builds the test program from the build-run-sim directory with:

```shell
# Configure: mount the current directory (build-run-sim) as /project
# and the sibling test-code-c sources as /project/test_code.
docker run \
    --rm \
    -v "$(pwd)":/project \
    -v "$(pwd)/../test-code-c":/project/test_code \
    fiveembeddev/riscv_xpack_gcc_dev_env:latest \
    cmake \
    -S test_code \
    -B build \
    -G "Unix Makefiles" \
    -DCMAKE_TOOLCHAIN_FILE=../test_code/riscv.cmake

# Build using the generated Makefiles.
docker run \
    --rm \
    -v "$(pwd)":/project \
    -v "$(pwd)/../test-code-c":/project/test_code \
    fiveembeddev/riscv_xpack_gcc_dev_env:latest \
    make \
    VERBOSE=1 \
    -C build
```

RISC-V Software Tracing with VCD and Spike

September 02, 2022 (toolchain,vcd,simulation,debugging,tracing)

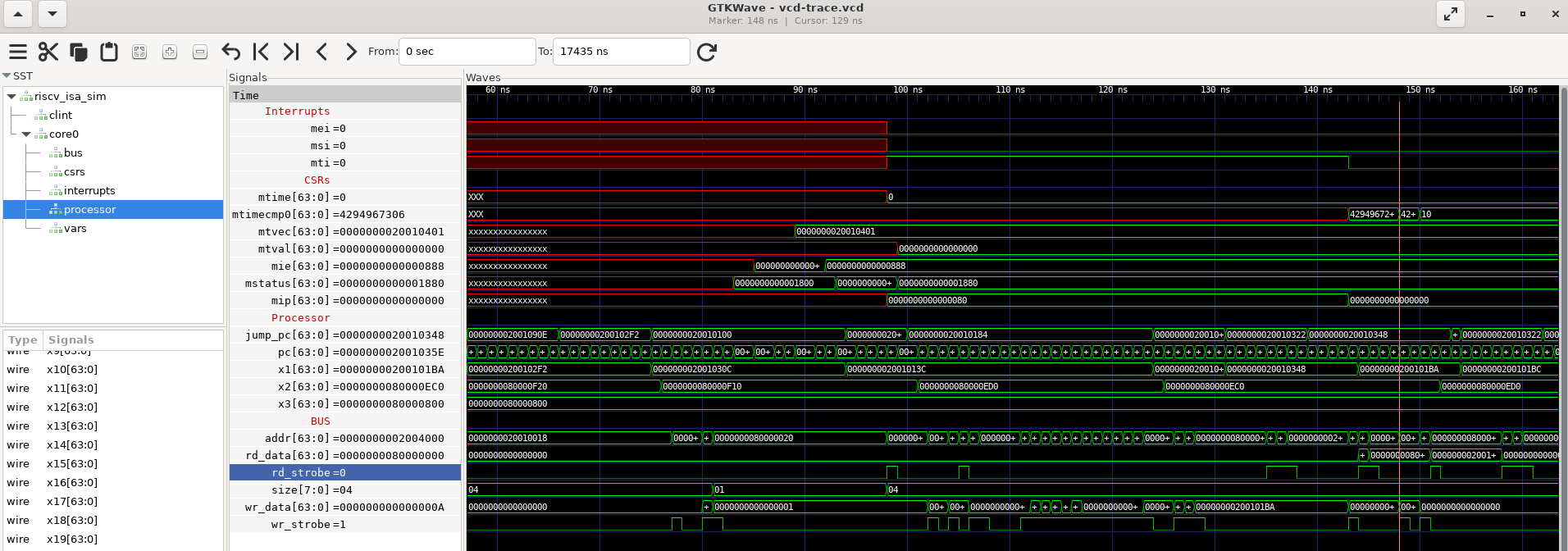

Viewing hardware and software interaction is much easier with a logic-analyzer-style trace. In almost every job I’ve had, I’ve added a VCD (Value Change Dump) tracer to the instruction set simulator. Such a trace shows both the “big picture” state flow and the “little details” of bus, register, interrupt and I/O interaction.

For that purpose I’ve forked the RISC-V ISA Simulator, https://github.com/five-embeddev/riscv-isa-sim/tree/vcd_trace.

About the fork

This fork adds a few features:

- Tracing of registers and the memory bus.

- A time limit for simulation.

- New commands to trace variables.

- Two-way symbol <-> address lookup.
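To show what such a trace contains, here is a minimal sketch of a VCD writer in C. It is not the fork's code. The helper names `vcd_header()` and `vcd_sample()`, the scope name `cpu`, the 1 ns timescale and the single-character identifier are assumptions for illustration. The key idea is that a value is only written when it changes, which keeps traces of long simulations manageable.

```c
#include <assert.h>
#include <stdint.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical tracer state for one 32-bit signal. */
typedef struct {
    FILE *out;      /* destination VCD file */
    char id;        /* VCD short identifier, printable ASCII '!'..'~' */
    uint32_t last;  /* last value written */
    int have_last;  /* 0 until the first value has been dumped */
} vcd_signal;

/* Write the VCD header declaring a single 32-bit wire. */
static void vcd_header(vcd_signal *s, FILE *out, const char *name, char id)
{
    /* VCD identifiers are printable ASCII; names must not contain spaces. */
    assert(id >= '!' && id <= '~');
    assert(strchr(name, ' ') == NULL);

    s->out = out;
    s->id = id;
    s->last = 0;
    s->have_last = 0;

    fprintf(out, "$timescale 1ns $end\n");
    fprintf(out, "$scope module cpu $end\n");
    fprintf(out, "$var wire 32 %c %s $end\n", id, name);
    fprintf(out, "$upscope $end\n");
    fprintf(out, "$enddefinitions $end\n");
}

/* Record the value at time t; only transitions reach the file. */
static void vcd_sample(vcd_signal *s, uint64_t t, uint32_t value)
{
    if (s->have_last && value == s->last) {
        return; /* unchanged: VCD only records value changes */
    }
    fprintf(s->out, "#%llu\nb", (unsigned long long)t);
    for (int bit = 31; bit >= 0; --bit) {
        fputc(((value >> bit) & 1u) ? '1' : '0', s->out);
    }
    fprintf(s->out, " %c\n", s->id);
    s->last = value;
    s->have_last = 1;
}
```

Calling `vcd_sample()` once per simulated instruction with, for example, the program counter produces a file that GTKWave can open directly (`gtkwave trace.vcd`).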

This is an example of firmware writing to the mtimecmp register:
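The following is a sketch of the kind of firmware code behind such a write. The function updates the 64-bit `mtimecmp` register on RV32, where the update takes two 32-bit stores. It follows the store sequence suggested in the RISC-V privileged specification, so a half-updated compare value cannot trigger a spurious timer interrupt. On RV64 a single 64-bit store is enough. The Spike CLINT address and the name `set_mtimecmp` are assumptions. The register address is passed in as a parameter so the logic can also be exercised on a host.

```c
#include <assert.h>
#include <stdint.h>

/* Assumption: Spike's CLINT maps hart 0's mtimecmp at 0x02004000. */
#define SPIKE_MTIMECMP_ADDR 0x02004000UL

/* Set the 64-bit mtimecmp on RV32 with three 32-bit stores.
 * mtimecmp[0] is the low word and mtimecmp[1] the high word.
 * Writing all-ones to the low word first keeps every intermediate
 * compare value at or above the old value (after the first store)
 * or the new value (after the second), so mtime cannot spuriously
 * pass a half-written compare value. */
static void set_mtimecmp(volatile uint32_t *mtimecmp, uint64_t value)
{
    assert(mtimecmp != 0);
    mtimecmp[0] = UINT32_MAX;               /* no smaller than old value */
    mtimecmp[1] = (uint32_t)(value >> 32);  /* no smaller than new value */
    mtimecmp[0] = (uint32_t)value;          /* new value */
}
```

On a Spike target it might be called as `set_mtimecmp((volatile uint32_t *)SPIKE_MTIMECMP_ADDR, next_deadline);`, where `next_deadline` is a hypothetical value computed from `mtime`.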

Simulating and Debugging with a Docker Containerized Toolchain

March 25, 2022 (toolchain,docker,simulation,debugging)

Containerized Development

In a previous post I used Docker to containerize building for a RISC-V target.

In this post I’ll simulate the resulting target binary in a containerized RISC-V ISA Simulator.

Spike ISA Sim

The official reference ISA simulator for RISC-V is Spike. There are other open source and commercial simulators that are more fully featured and performant. However, the aim of this post is to describe a solution for quick and easy debugging and simulation of the low-level examples on this blog.

Spike implements a functional model of the RISC-V hart(s) and the debug interface. GDB cannot connect to Spike directly. Instead, it must connect to OpenOCD, which in turn connects to Spike’s model of the RISC-V JTAG debug interface. Spike’s README describes how to do this, but loading an example program this way is pretty tedious.
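Here is what happens between OpenOCD and Spike. Spike exposes its JTAG port on a TCP socket, enabled with `--rbb-port`. OpenOCD drives that socket with its remote_bitbang protocol, sending one ASCII character per pin update. The characters `'0'` to `'7'` set TCK, TMS and TDI, weighted 4, 2 and 1, and `'R'` requests the current TDO value. The sketch below only encodes those characters. The helper names are hypothetical and the socket handling is left out.

```c
#include <assert.h>
#include <stddef.h>

/* Encode one remote_bitbang write command: '0' + 4*tck + 2*tms + tdi. */
static char rbb_write(int tck, int tms, int tdi)
{
    assert((tck == 0 || tck == 1) && (tms == 0 || tms == 1) &&
           (tdi == 0 || tdi == 1));
    return (char)('0' + 4 * tck + 2 * tms + tdi);
}

/* One JTAG clock: set up TMS/TDI with TCK low, then raise TCK so the
 * TAP samples them on the rising edge. Writes two characters to out. */
static size_t rbb_clock(char *out, int tms, int tdi)
{
    out[0] = rbb_write(0, tms, tdi);
    out[1] = rbb_write(1, tms, tdi);
    return 2;
}

/* Five clocks with TMS=1 put the TAP in Test-Logic-Reset from any state.
 * out must have room for 11 bytes: 10 commands plus a terminating NUL. */
static size_t rbb_tap_reset(char *out)
{
    size_t n = 0;
    for (int i = 0; i < 5; ++i) {
        n += rbb_clock(out + n, 1, 0);
    }
    out[n] = '\0';
    return n;
}
```

For example, `rbb_tap_reset()` yields `"2626262626"`. That is five rising TCK edges with TMS high, the standard way to reach Test-Logic-Reset.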

The container example downloads and builds the appropriate source code and manages running the independent tools.

Docker Images

The Dockerfiles are in https://github.com/five-embeddev/build-and-verify. Assume it’s checked out to a directory named build-and-verify.

Building with a Docker container Toolchain

March 01, 2022 (toolchain,docker)

Containerized Development

Modern development is moving towards packaging tools in containers. There are several benefits to containerizing development tools:

- A simpler tool deployment process: the binary image, with all its dependencies, can simply be pulled from a server and run.

- An automated and consistent deployment process that can be shared between development machines and cloud based build servers.

- A consistent set of tools for all team members.

- The ability to build with general-purpose IDEs rather than whatever tools a device vendor may provide.

- Isolation of dependencies used for different devices and build targets.

For RISC-V there are a few more benefits:

- A “recipe” to consistently build the vendor-neutral tools, such as https://github.com/riscv-collab/riscv-gnu-toolchain.

- New versions of the tools, with support for new extensions etc., can be easily deployed.

I’ve put together a set of Docker images and Docker Compose build and run configurations with the aim of deploying them with GitHub’s workflows.

They are located here: https://github.com/five-embeddev/build-and-verify

Extending PlatformIO

November 05, 2021 (articles,toolchain,C)

Information about extending cross compilation with PlatformIO has been added.

Machine Readable Specification Data

September 10, 2021 (registers,spec,interrupts,opcodes)

As RISC-V is a new architecture, there will be new development at all layers of the software and hardware stack. Rather than writing code from scratch based on human-language specifications, a smarter way to work can be to translate a machine-readable specification into code.

“Machine readable” does not need to mean an all-encompassing formal model of the architecture. There are many convenient formats, such as CSV, YAML, XML and JSON, that can be parsed and transformed using the packages available in most scripting languages.
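As a small example of that idea, this sketch turns a hypothetical two-column CSV of CSR names and addresses into C `#define` lines. The input format, the `CSR_` prefix and the function name are assumptions, not the format of any official specification file. A real generator would more likely be a script. The addresses in the example (`mstatus` at 0x300, `mtvec` at 0x305) are the ones assigned in the RISC-V privileged specification.

```c
#include <assert.h>
#include <ctype.h>
#include <stdio.h>
#include <stdlib.h>
#include <string.h>

/* Convert one "name,address" line (hypothetical CSV format) into
 * "#define CSR_NAME 0xADDR". Returns 0 on success, -1 if malformed. */
static int csv_line_to_define(const char *line, char *out, size_t out_size)
{
    char name[32];
    assert(line != NULL && out != NULL && out_size > 0);

    /* The name field runs up to the first comma. */
    const char *comma = strchr(line, ',');
    if (comma == NULL) {
        return -1;
    }
    size_t len = (size_t)(comma - line);
    if (len == 0 || len >= sizeof name) {
        return -1;
    }
    for (size_t i = 0; i < len; ++i) {
        name[i] = (char)toupper((unsigned char)line[i]);
    }
    name[len] = '\0';

    /* The address field may be decimal or 0x-prefixed hex (base 0). */
    char *end = NULL;
    unsigned long addr = strtoul(comma + 1, &end, 0);
    if (end == comma + 1) {
        return -1; /* no number after the comma */
    }

    int written = snprintf(out, out_size, "#define CSR_%s 0x%03lx", name, addr);
    return (written < 0 || (size_t)written >= out_size) ? -1 : 0;
}
```

A driver would read the CSV line by line with `fgets()` and write each result into a generated header, so the C definitions always track the data file.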

CMake Cross Compilation for RISC-V Targets

March 20, 2021 (articles,toolchain,baremetal,cmake)

An example of cross compiling a baremetal program to RISC-V with CMake.

RISC-V Compile Targets, GCC

February 09, 2021 (gcc,base_isa,extensions,abi)

Note to self: When compiling the riscv-toolchain for embedded systems, set the configure options!

The toolchain can be cloned from the official RISC-V GitHub. Once the dependencies are installed, it’s straightforward to compile.

```shell
$ git clone --recursive https://github.com/riscv-collab/riscv-gnu-toolchain.git
```

RISC-V Tools Quick Reference

October 29, 2019 (toolchain,quickref)

An initial toolchain quick reference.

RISC-V Compile Targets, GCC

June 26, 2019 (gcc,base_isa,extensions,abi)

NOTE: Since this was written, the riscv-toolchain-conventions document has been released.

Getting started with RISC-V. Compiling for the RISC-V target. This post covers the GCC machine architecture (-march), ABI (-mabi) options and how they relate to the RISC-V base ISA and extensions. It also looks at the multilib configuration for GCC.

Selecting

- the base ISA,

- the extensions, and

- the target ABIs.
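One way to check which base ISA, extensions and ABI a build actually targets is to inspect the preprocessor macros that RISC-V GCC defines from `-march` and `-mabi`. Examples include `__riscv_xlen`, `__riscv_compressed` and `__riscv_float_abi_double`, which are documented in the RISC-V C API specification. The sketch below collects a few of them into a struct. The struct and function names are hypothetical. Compiled for a non-RISC-V host, it simply reports a non-RISC-V target.

```c
#include <assert.h>
#include <string.h>

/* Hypothetical summary of the compile target, filled from the
 * RISC-V predefined macros (all zero when not compiling for RISC-V). */
typedef struct {
    int is_riscv;          /* __riscv */
    int xlen;              /* __riscv_xlen: 32 or 64 */
    int has_mul;           /* M extension: __riscv_mul */
    int has_atomic;        /* A extension: __riscv_atomic */
    int has_compressed;    /* C extension: __riscv_compressed */
    int flen;              /* __riscv_flen: 0, 32 or 64 */
    const char *float_abi; /* "soft", "single", "double" or "n/a" */
} target_info;

static target_info get_target_info(void)
{
    target_info t;
    memset(&t, 0, sizeof t);
    t.float_abi = "n/a";
#if defined(__riscv)
    t.is_riscv = 1;
    t.xlen = __riscv_xlen;
    assert(t.xlen == 32 || t.xlen == 64);
#endif
#if defined(__riscv_mul)
    t.has_mul = 1;
#endif
#if defined(__riscv_atomic)
    t.has_atomic = 1;
#endif
#if defined(__riscv_compressed)
    t.has_compressed = 1;
#endif
#if defined(__riscv_flen)
    t.flen = __riscv_flen;
#endif
#if defined(__riscv_float_abi_soft)
    t.float_abi = "soft";
#elif defined(__riscv_float_abi_single)
    t.float_abi = "single";
#elif defined(__riscv_float_abi_double)
    t.float_abi = "double";
#endif
    return t;
}
```

For example, building with `-march=rv32imac -mabi=ilp32` should report an XLEN of 32, the M, A and C extensions, no floating-point registers (`flen` of 0) and the soft-float ABI.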

Five EmbedDev
